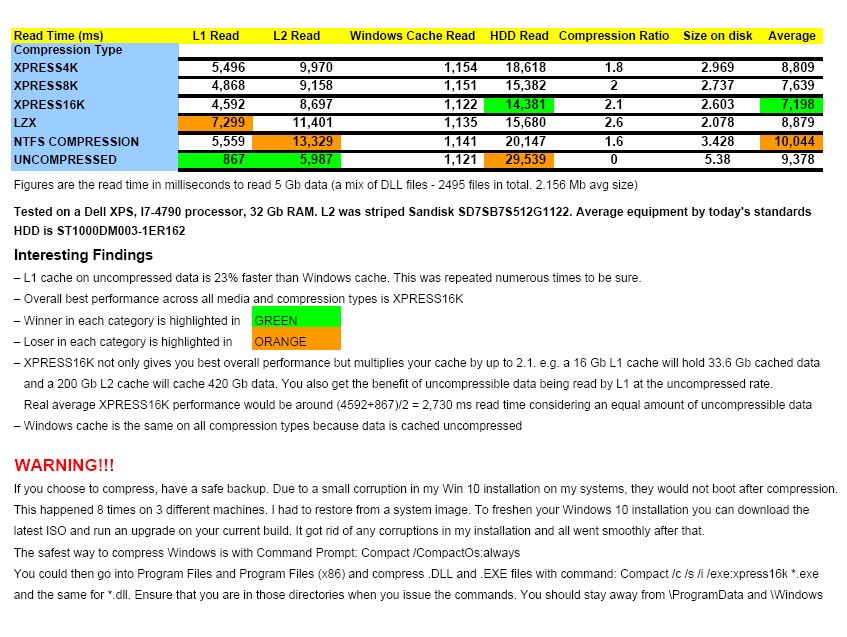

I ran some performance tests with PrimoCache and the various compression options in Windows (XPRESS4K, XPRESS8K, XPRESS16K, LZX and NTFS) and obtained some very interesting results.

Two findings that I want to mention up front are:

1. PrimoCache's L1 cache reads uncompressed data 23% faster than the Windows cache!

2. With XPRESS16K compression, you get the best overall average performance and can store about DOUBLE the file data in L1 and L2 (effectively doubling their size).

CAUTION: Please be sure to know what you are doing if you are going to compress your data. Details of my disasters (and some advice) are below.

The image contains the details and results of the performance tests, and further discussion follows below.

Further Discussion

To calculate the actual read throughput, divide 5.38 GB by the read time in seconds (the table lists milliseconds, so divide those by 1,000 first).
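Here is a minimal Python sketch of that arithmetic. The 5.38 GB test size comes from the post; the 2,000 ms read time in the example is a hypothetical placeholder, not a value from the table. The function names are illustrative, and `percent_faster` assumes "X% faster" means the ratio of read times minus one.

```python
TEST_SIZE_GB = 5.38  # size of the test data set, from the post


def throughput_gb_per_s(read_ms: float, size_gb: float = TEST_SIZE_GB) -> float:
    """Read throughput in GB/s for a read time given in milliseconds."""
    if read_ms <= 0:
        raise ValueError("read time must be positive")
    return size_gb / (read_ms / 1000.0)  # convert ms to seconds first


def speedup(slow_ms: float, fast_ms: float) -> float:
    """How many times faster the faster read is (ratio of read times)."""
    return slow_ms / fast_ms


def percent_faster(slow_ms: float, fast_ms: float) -> float:
    """Speedup expressed as 'X% faster' (assumption: 1.23x means 23%)."""
    return (speedup(slow_ms, fast_ms) - 1.0) * 100.0


if __name__ == "__main__":
    # Hypothetical read time, not a value from the table.
    print(f"{throughput_gb_per_s(2000):.2f} GB/s")  # prints 2.69 GB/s
```

Plug the read times from the table into `throughput_gb_per_s` to compare rows, or pass two of them to `speedup` to see how many times faster one configuration is.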

When you consider how slowly uncompressed data reads from the spinner (HDD), you can understand why Windows 10 and 11 drag so much on a regular HDD system. Even without any caching software, you can roughly double your read performance just by compressing your data with the XPRESS16K algorithm.

Even though uncompressed data reads from L1 around five times faster than XPRESS16K data, the large time difference in the table is for reading the full 5.38 GB. In general use of your PC, typical reads and writes (e.g. loading a program) are on the order of a few hundred MB. You generally do not perceive any slowdown when reading XPRESS16K data from L1 compared with uncompressed data. It feels about the same, and you get the benefit of roughly double the data in your cache.

Over long-term use, this means you hit the L1 and L2 caches about 10 to 15% more often than with uncompressed data. I typically sit at around a 93% combined (L1 and L2) cache hit rate, but I do dedicate 24 GB of my 32 GB of RAM to L1. In doing so, I effectively run a super-lightning-fast 8 GB system. If I get low on memory (very rare), anything that would be paged to disk is paged to L1 at L1 speeds.
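The "double the data in your cache" point is capacity arithmetic. Below is a minimal Python sketch, assuming PrimoCache caches disk blocks as they are stored, so a file compressed to half its size takes half the cache space. The helper names and the 10 GB folder that compresses to 5 GB are hypothetical. It estimates capacity only and does not model the 10 to 15% hit-rate gain, which is an observed result.

```python
def compression_ratio(original_gb: float, on_disk_gb: float) -> float:
    """Ratio of logical file size to the space it actually occupies on disk."""
    if on_disk_gb <= 0:
        raise ValueError("on-disk size must be positive")
    return original_gb / on_disk_gb


def effective_cache_gb(cache_gb: float, ratio: float) -> float:
    """Logical file data a block-level cache can hold.

    Assumes the cache stores blocks exactly as they sit on disk, so each
    cached compressed block stands for `ratio` times as much file data.
    This is a capacity estimate only; it says nothing about hit rate.
    """
    return cache_gb * max(ratio, 1.0)  # incompressible data stays at 1:1


if __name__ == "__main__":
    # Hypothetical: a 10 GB folder that compresses to 5 GB, with a 24 GB L1.
    print(effective_cache_gb(24, compression_ratio(10.0, 5.0)))  # prints 48.0
```

The `max(ratio, 1.0)` guard reflects that files which do not compress stay at their original size rather than growing.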

The beauty of the new compression algorithms compared with NTFS compression is that they do not try to compress incompressible files, so those files remain uncompressed on the disk. Windows then does not have to decompress them on the fly, as it must with NTFS compression, which tries to compress all data. NTFS compression is also subject to severe fragmentation: it is not uncommon to see 50,000 fragments after compressing a 2 or 3 GB file. The new algorithms generally do not fragment files when you compress them; you might get a few fragments here and there.

Also understand that the new algorithms (XPRESS4K, XPRESS8K, XPRESS16K, LZX) are intended for data that is never modified, e.g. .DLL and .EXE files. Regular data files will compress too, but if you modify and save such a file, it is saved uncompressed. That said, if you have older data files that you no longer access or modify, an algorithm such as XPRESS16K is a great way to keep them readable in native Windows format while they occupy about half the space on your drive.
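If you want to apply this to older files, the `compact.exe` tool built into Windows 10 and 11 uses these algorithms through its `/C` and `/EXE:<algorithm>` switches. The Python sketch below is a hypothetical helper, not part of PrimoCache or Windows. It selects files that have not been modified for a chosen number of days and builds one `compact.exe` command per file. By default it only prints the commands (a dry run) so you can review them before anything changes. The `D:\Archive` folder and 180-day threshold are placeholder assumptions.

```python
import os
import subprocess
import time
from pathlib import Path
from typing import Iterable, List, Optional

# Algorithms accepted by compact.exe's /EXE switch (Windows 10 and 11).
WOF_ALGORITHMS = {"XPRESS4K", "XPRESS8K", "XPRESS16K", "LZX"}


def stale_files(root: str, min_age_days: float, now: Optional[float] = None) -> List[Path]:
    """Return files under root not modified for at least min_age_days days."""
    now = time.time() if now is None else now
    cutoff = now - min_age_days * 86400  # 86400 seconds per day
    found = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            try:
                if path.stat().st_mtime <= cutoff:
                    found.append(path)
            except OSError:
                continue  # unreadable or vanished file: skip it
    return sorted(found)


def compact_command(path: Path, algorithm: str = "XPRESS16K") -> List[str]:
    """Build the compact.exe argument list that compresses one file."""
    algo = algorithm.upper()
    if algo not in WOF_ALGORITHMS:
        raise ValueError(f"unsupported algorithm: {algorithm}")
    return ["compact.exe", "/C", f"/EXE:{algo}", str(path)]


def compress(paths: Iterable[Path], algorithm: str = "XPRESS16K", dry_run: bool = True) -> None:
    """Print (dry run) or execute one compact.exe call per file."""
    for path in paths:
        cmd = compact_command(path, algorithm)
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)  # Windows only


if __name__ == "__main__":
    # Placeholder folder and threshold; review the printed commands first.
    compress(stale_files(r"D:\Archive", min_age_days=180))
```

Filtering on modification time matches the caveat above: a compressed file you later modify and save is stored uncompressed again, so files you still edit gain little.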

Hopefully, this shows that you can take advantage of the compression features already built into Windows 10 and 11 and get double the benefit from PrimoCache. It should also take some pressure off Romex Software, which would otherwise face the daunting and delicate task of implementing compression in PrimoCache.